Modern technology has a way of instilling paranoia.

Right now, your smartphone knows exactly where you are, what kind of websites you like to frequent and can, with the push of a button, answer even the most trivial of questions.

Aside from ruining the time-honoured tradition of the Pub Quiz, our headlong dash towards the technological singularity has raised some more troubling issues.

Thirty years ago, anyone who took The Terminator as anything more than an action movie with an interesting premise would have been seen as a paranoid technophobe, but now, with the advent of military robots, things are getting a little bit more uncomfortable.

We’re not saying that the machines are going to kill us all any time soon, or even that the events of the Terminator movies are in any way connected to reality (the muscular, naked Austrian men in the Sick Chirpse offices are there for entirely unrelated reasons), but last month a U.N. official raised the question of just how safe it is to be creating murder-bots.

Christof Heyns, the U.N. Special Rapporteur on extrajudicial killings, addressed the Human Rights Council on the subject of “Lethal Autonomous Robotics,” meaning machines that are able to kill without direct human input once they are switched on.

It could be argued that this kind of technology has been with us for a long time; what is a land mine if not a machine which kills indiscriminately once activated? Come to that, what is a basic deadfall trap, a mechanism invented thousands of years ago?

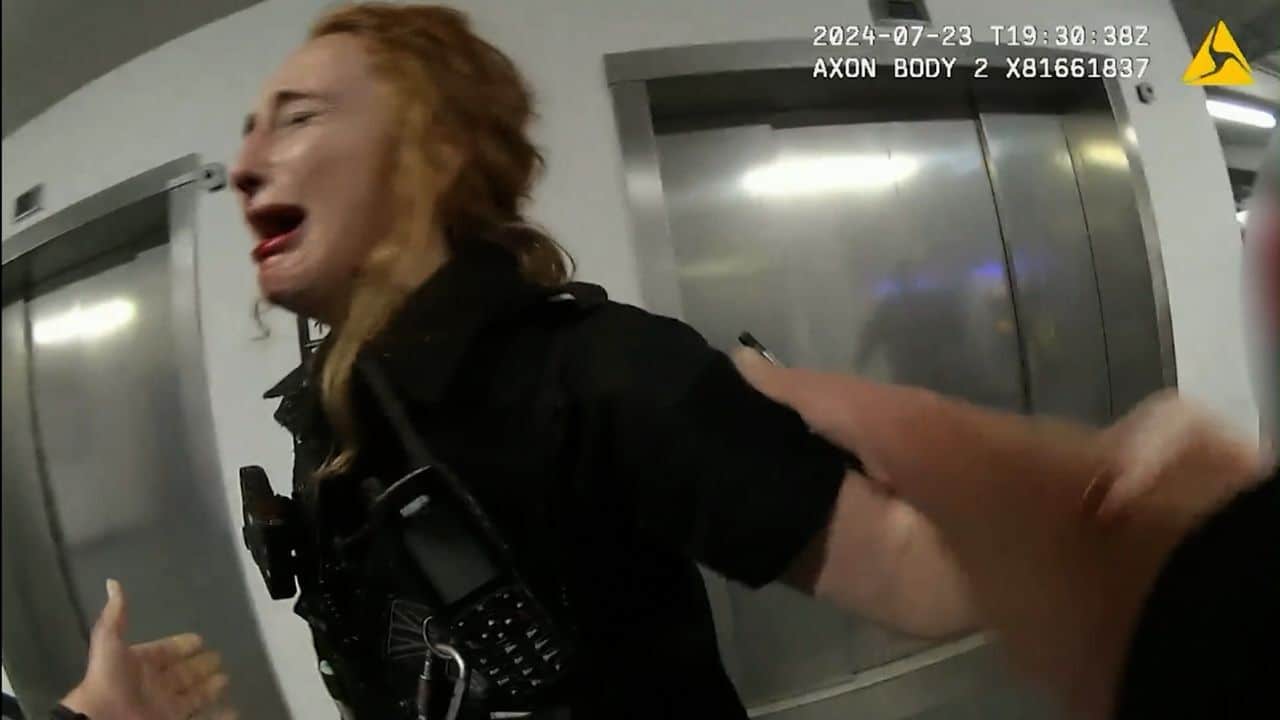

Still, what concerns Mr. Heyns is the evolution of machines such as aerial drones – once used only for surveillance, now used to bomb people, and in the future, in Mr. Heyns’ eyes, possibly used as completely unmonitored killing machines.

The overriding concern is one of basic humanity. Mr. Heyns claims that, should we successfully build a completely autonomous drone, it would lack empathy. It could be programmed to, say, kill anyone who crosses into a certain area of land, but wouldn’t differentiate between an Al Qaeda member loaded with Semtex and a lost Girl Scout loaded with cookies.

By that logic, it’s a valid concern. Anything that can be set to “kill” and then left to its own devices is a bad idea – from a flying drone to a Rottweiler, it usually only leads to trouble.

On the other hand, Mr. Heyns should really take a look at the statistics behind existing drone strikes. At present, conventional, remotely piloted drones are hideously inaccurate, or at least they kill far more innocent bystanders than they do “terrorists.” One study estimated that drone strikes kill fifty civilians for every legitimate target.

If that weren’t an appalling enough statistic, the CIA (the biggest fans of drone attacks) has been known to engage in “double tap” strikes – bombing an area, waiting a few minutes and then bombing it again – in an effort to take out stragglers, rescuers, or anyone who has just turned up to see what the enormous explosion was just now.

These reports don’t exactly reek of human empathy, perhaps because the people pulling the trigger tend to be hundreds of miles away, staring impassively at a video monitor.

More depressingly, it could just be that after years of extended slaughter, the American military-industrial complex couldn’t give a fuck about one more crowd of brown people that’s just been obliterated.

With that in mind, maybe Christof Heyns should stop focusing his attention on abstract concepts and look at the here and now. Given the lack of empathy shown by the average drone controller, could Skynet really be that much worse?!
