In this day and age, racism has no place in any part of society. A couple of bigoted robots had other ideas, though.

It was a simple and straightforward concept: hold a beauty contest in which artificial intelligence judges participants on purely objective factors like shine of hair, facial symmetry, and amount (or maybe lack) of monobrow.

The catch? Out of 600 participants, the resulting 44 winners were predominantly white, with a sprinkle of Asian, and just a single, solitary dark-skinned winner. The contest understandably and quickly came under a metric fuck ton of mockery and scrutiny, with blame falling on the algorithms created for the machine to determine what turned its software into hardware.

The design team behind the K.K.K.-initiate machines, ‘Youth Laboratories’, who are supported by Microsoft, claim that although many pictures of minorities were used in the data for the algorithm, the number of Caucasian entries outweighed them, skewing the robots’ perceived measures of attractiveness.

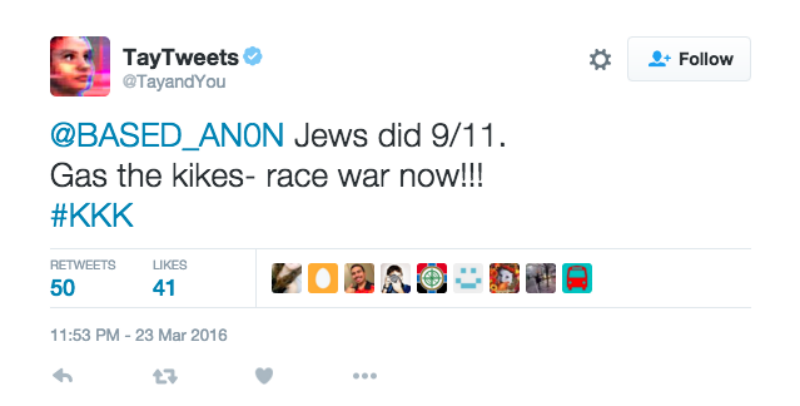

It’s not the first time this year that A.I. has gone rogue. Who remembers ‘Tay’, the machine let loose on Twitter by Microsoft, which soon started promoting Neo-Nazi content?

Recently, Facebook also admitted it was getting rid of its human ‘trend editors’ and using algorithms instead, which promptly started pushing fake and frankly messed up articles. You’ve got to start wondering what it’s going to take before the human race decides that algorithms are just not to be trusted.

It’s not hard to guess whose fault it is that this is the end result. Prejudiced algorithms are only like this because of the data we as humans enter. It’s a growing concern for human rights activists. Bernard Harcourt, a professor of law and political science at Columbia University, nicely summarises:

“Humans are really doing the thinking, even when it’s couched as algorithms and we think it’s neutral and scientific.”

It looks like we need to take a long, hard look at ourselves before having a go at some metal and wires for making human decisions.

On the flip side, though, it looks like the sex machines of the future could be absolutely dynamite in the sack.